Allegedly, 30% of all web pages are now WordPress. I’m guessing most of these WordPress sites aren’t typical blog sites, but there sure are many of them out there.

Which makes it so puzzling why Google and WordPress don’t really play together very well.

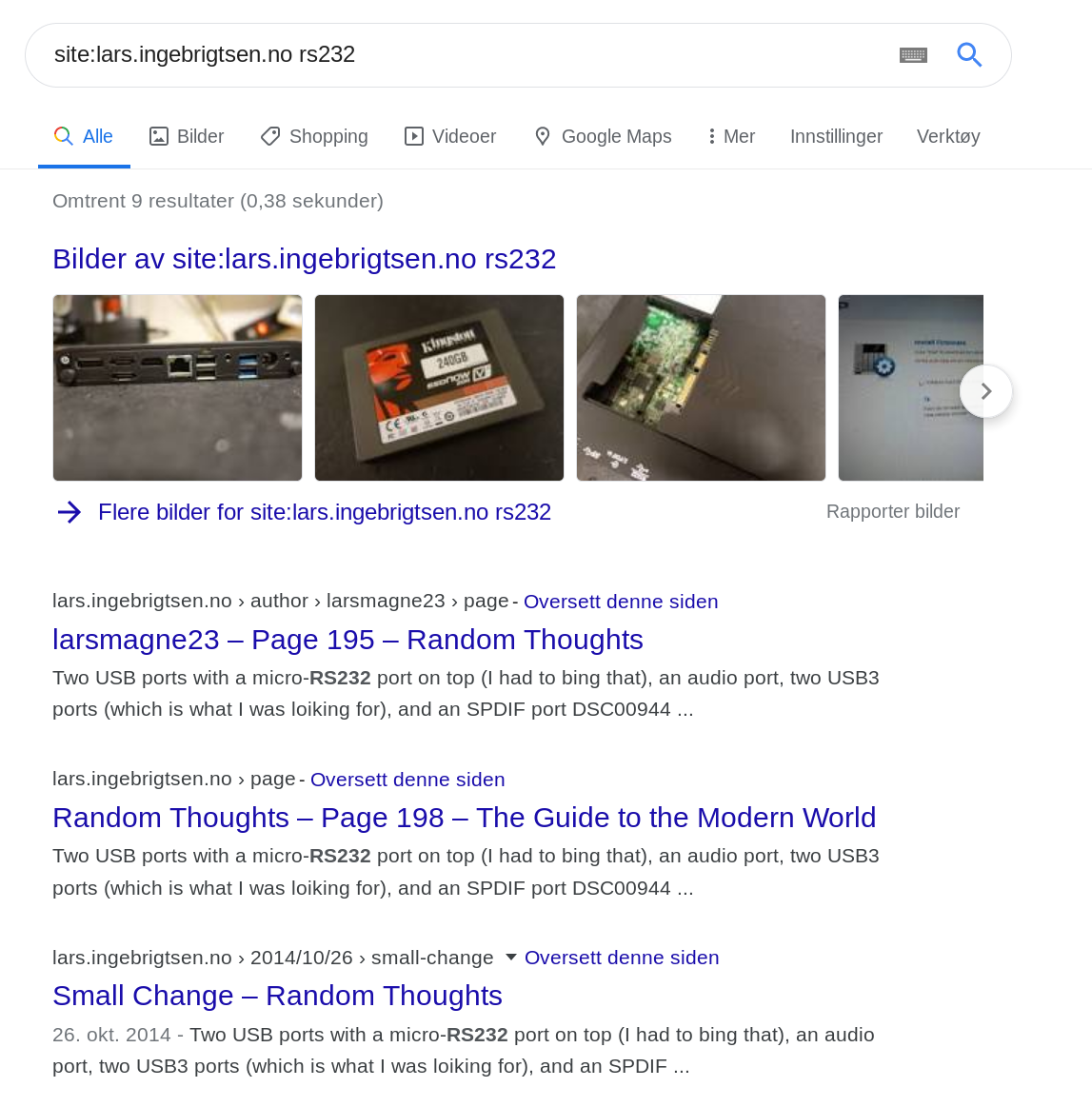

Lemme just use one of my own stupid hobby sites, Totally Epic, as an example:

OK, the first hit is nice, because it’s the front page. The rest of page one in the search results is all “page 14”, “category” pages and the like, none of which anybody searching is interested in.

The worst of these are the “page 14” links: WordPress, by default, does pagination by starting at the most recent article, and then counts backwards. So if you have a page length of five articles, the five most recent articles will be on the first page, then the next five articles are on “page 2”, and so on.

You know the problem with actually referring to these pages after the fact: What was once the final article on “page 2” will become the first article on “page 3” when the blog bloviator writes a new article: It pushes everything downwards.

So when you’re googling for whatever, and the answer is on a “page 14” link, it usually turns out not to be there, anyway. Instead it’s on “page 16”. Or “page 47”. Who knows?
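The drift is just integer division. Here is a minimal sketch of the arithmetic, assuming a fixed page length of five, newest-first ordering, and no deleted posts; `page_of` is a hypothetical helper for illustration, not a WordPress function.

```python
def page_of(newer_posts: int, posts_per_page: int = 5) -> int:
    """1-based page number for a post that has `newer_posts` posts published after it.

    Assumes newest-first pagination with a fixed page length (hypothetical helper).
    """
    return newer_posts // posts_per_page + 1

print(page_of(9))   # 2: the last slot on "page 2"
print(page_of(10))  # 3: one new article later, the same post opens "page 3"
print(page_of(65))  # 14: the post Google found on "page 14"...
print(page_of(75))  # 16: ...has moved to "page 16" after ten more articles
```

Every new article bumps `newer_posts` by one for every older post, so any “page N” link is only accurate until the next publish.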

Who can we blame for this sorry state of affairs? WordPress, sure; it’s sad that they don’t use some kind of permanent link structure for “pages”. Instead of https://totally-epic.kwakk.info/page/5/, the link could have been https://totally-epic.kwakk.info/articles/53-49/; i.e., the post numbers, or https://totally-epic.kwakk.info/date/20110424T042353-20110520T030245/ (a publication time range), or whatever. (This would mean that the pages could increase or shrink in size if the bloviator deletes or adds articles with a “fake” time stamp later, but whatevs?)
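To make the idea concrete, here is a sketch of how such stable page links could be built from the posts on a page. This is entirely hypothetical (WordPress does nothing like it), and the `/articles/` and `/date/` URL shapes are just the ones from the paragraph above.

```python
from datetime import datetime

# Matches the 20110424T042353 style used in the example URLs.
FMT = "%Y%m%dT%H%M%S"

def stable_page_paths(posts):
    """Build both hypothetical permalink styles for one page of posts.

    `posts` is a list of (post_id, published) tuples, newest first.
    """
    newest_id, newest_time = posts[0]
    oldest_id, oldest_time = posts[-1]
    by_id = f"/articles/{newest_id}-{oldest_id}/"
    by_date = f"/date/{oldest_time.strftime(FMT)}-{newest_time.strftime(FMT)}/"
    return by_id, by_date

# Only the newest and oldest post on a page matter, so the ones in between are left out.
page = [
    (53, datetime(2011, 5, 20, 3, 2, 45)),
    (49, datetime(2011, 4, 24, 4, 23, 53)),
]
print(stable_page_paths(page))
# ('/articles/53-49/', '/date/20110424T042353-20110520T030245/')
```

Publishing a new article would add a new page at the front instead of renumbering every old one, so a link Google indexed last year still points at the same posts.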

Can we also blame Google? Please? Can we?

Sure. There’s a gazillion blogs out there, and they basically all have this problem, and Google could have special-cased it for WordPress (remember that 30% thing? OK, it’s a dubious number) to rank these overview pages lower, and rank the individual articles higher. Because it’s those individual pages we’re interested in.

This brings us to a related thing we can blame Google for: They’re just not indexing obscure blogs as well as they used to. Many’s the time I’m looking for something I’m sure I’ve seen somewhere, and it doesn’t turn up anywhere on Google (not even on the Dark Web; i.e., page 2 of the search results). Here’s a case study.

But that’s an orthogonal issue: Is there something us blog bleeple can do to help with the situation, when both Google and WordPress are so uniquely useless in the area?

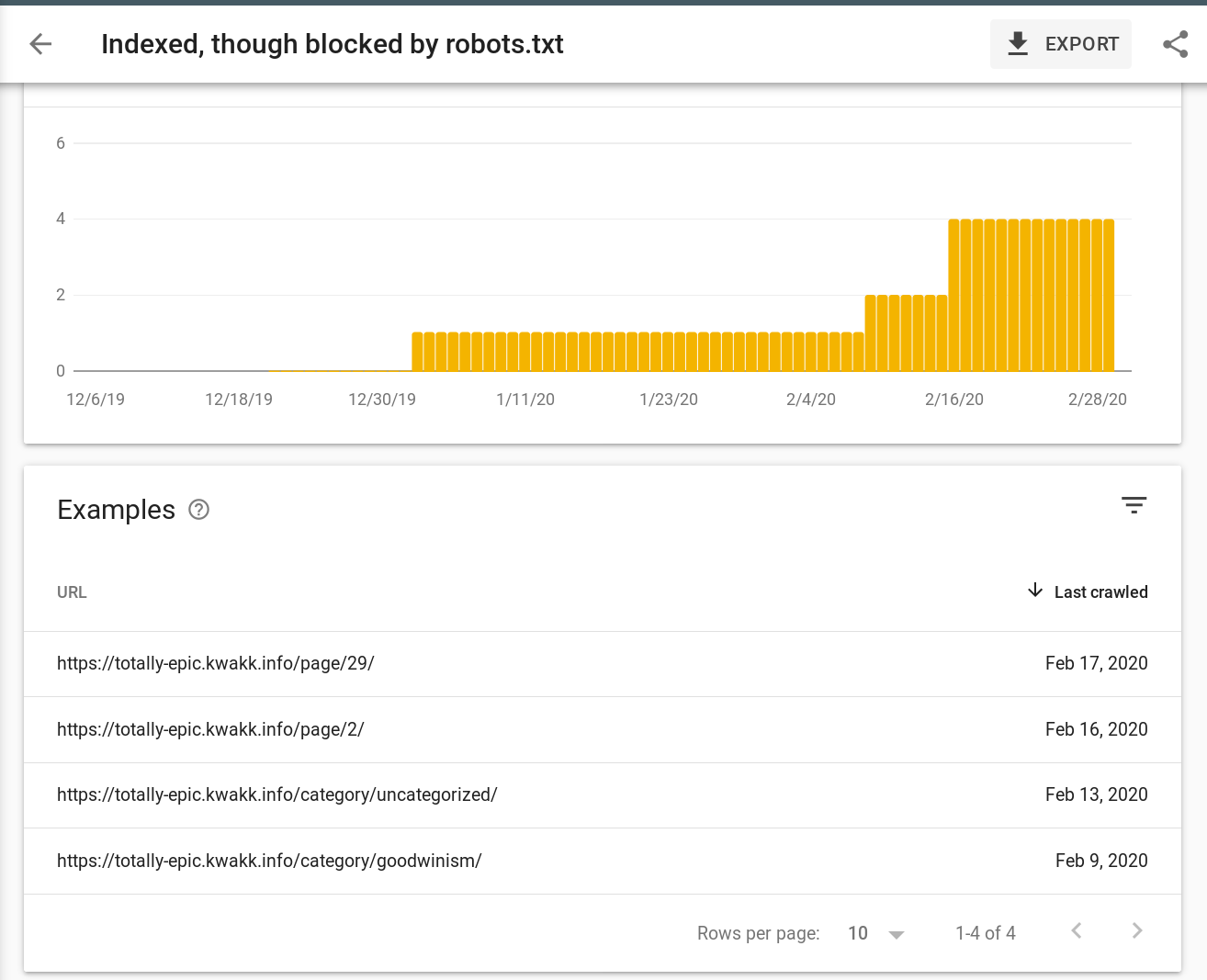

Uneducated as I am, I imagined that putting this in my robots.txt would help keep the useless results out of Google:

    User-agent: *
    Disallow: /author/
    Disallow: /page/
    Disallow: /category/
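For what it’s worth, the rules themselves do what they say. Python’s standard `urllib.robotparser` can check them; the `/category/comics/` and `/author/admin/` paths below are made-up examples. Note that this only answers “may a crawler fetch this URL?”, which is all robots.txt governs.

```python
from urllib.robotparser import RobotFileParser

# The rules from the robots.txt above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /author/
Disallow: /page/
Disallow: /category/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Hypothetical sample paths on the site.
for path in ["/", "/page/14/", "/category/comics/", "/author/admin/"]:
    url = "https://totally-epic.kwakk.info" + path
    print(path, "crawlable" if parser.can_fetch("Googlebot", url) else "blocked")
```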

Instead this just made my Google Search Console give me an alert:

Er, OK. I blocked it, but you indexed it anyway, and that’s something you’re asking me to fix?

You go, Google.

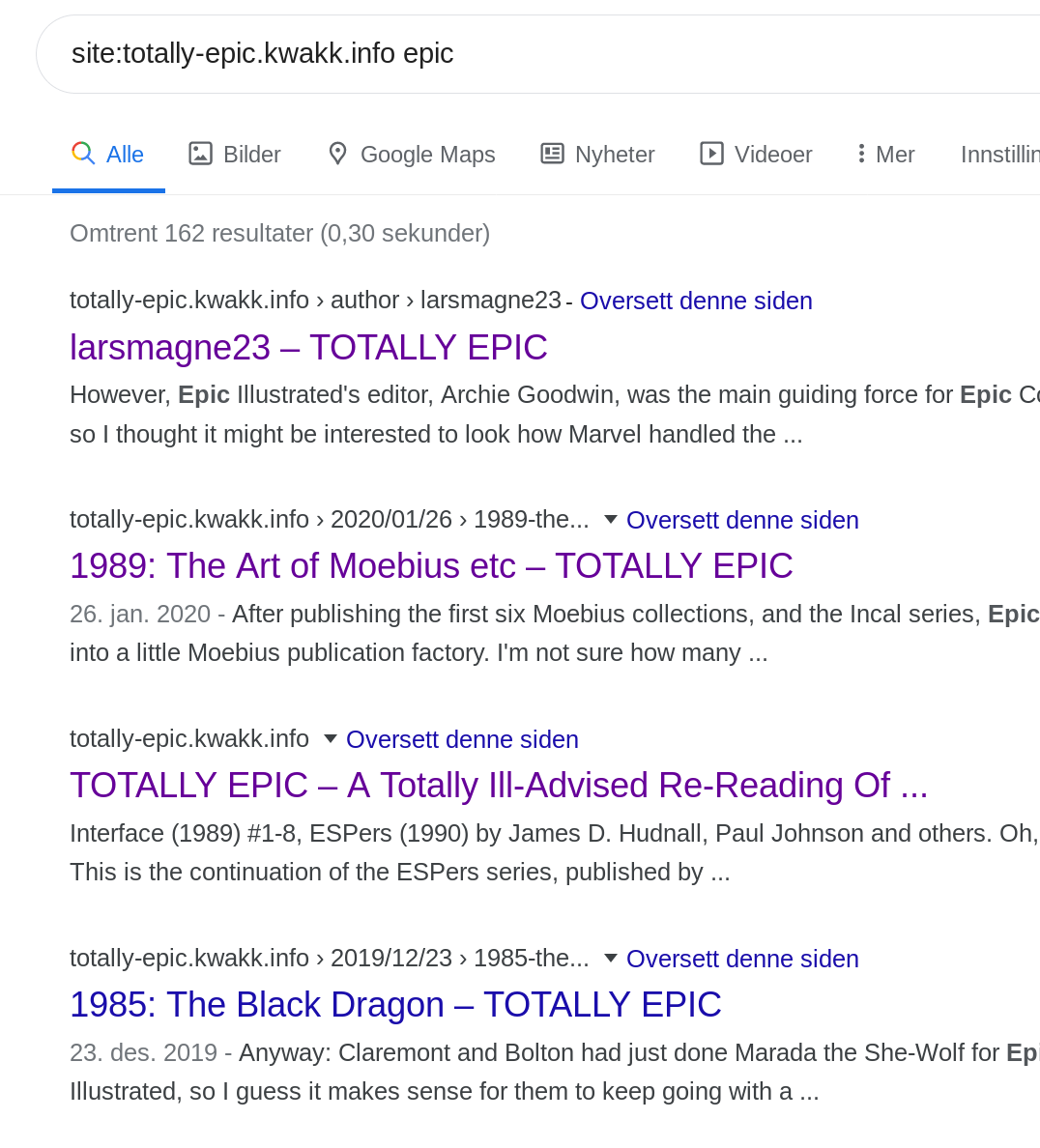

Granted, adding the robots.txt does seem to help with the ranking a bit: If you actually search for something now, you do get “real” pages on the first page of results:

The very first link is one of the “denied” pages, though, so… it’s not… very confidence-inducing.

Googling (!) around shows that Google mostly treats robots.txt as a sort of hand-wavy hint about what it should do (it stops Google from crawling a page, not from indexing its URL), because the California DMV added a robots.txt file in 2006.

It … makes … some kind of sense? I mean, for Google.

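Instead, the edict from Google seems to be that we should use a robots.txt file that lets everything be crawled, and put a `<meta name="robots" content="noindex,follow">` directive in the HTML of the pages we don’t want indexed. (Google has to be allowed to crawl a page to see that tag in the first place.)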
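If you want to check that a page actually carries the directive, here is a small sketch using Python’s standard `html.parser`. The `RobotsMetaFinder` class and `is_noindexed` function are hypothetical helpers, and this only looks at the meta tag, not at any `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser

class RobotsMetaFinder(HTMLParser):
    """Collects the directives from any <meta name="robots"> tags in a page."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names; values may be None.
        if tag != "meta":
            return
        attrs = dict(attrs)
        if (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            self.directives.extend(d.strip().lower() for d in content.split(","))

def is_noindexed(html: str) -> bool:
    """True if the page asks robots not to index it (hypothetical helper)."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return "noindex" in finder.directives

print(is_noindexed('<head><meta name="robots" content="noindex,follow"></head>'))
```

Feed it the HTML of a “page 14” URL and the front page: only the former should come back `True`.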

Fortunately, there’s a plugin for that. But googling for it isn’t easy, because whenever you google for stuff like this you get a gazillion SEO pages about how to get more of your pages into Google, not fewer. Oh, and this plugin seems even better (it gives you finer control over which pages get noindexed).

So I added this to that WordPress site on March 5th, and I wonder how long it’ll take for the pages in question to disappear from Google (if ever). I’ll update when/if that happens.

Still, this future is pretty sad. Instead of flying cars we have the “Robots “noindex,follow” meta tag” WordPress plugin.

[Edit one week later: No changes in the Google index so far.]

[Edit four weeks later: All the pagination pages now no longer show up in Google if I search for something (like “site:totally-epic.kwakk.info epic”), so that’s definitely progress. If I just search for “site:totally-epic.kwakk.info” without any query items, then they’ll show up anyway, but I guess that doesn’t really matter much, because nobody does that.]

One thought on “Search Index Cleanliness Is Next To Something”